preprocessing.scale, check these out | What is preprocessing scale?

What is preprocessing scale?

The preprocessing.scale() algorithm puts your data on one scale. This is helpful with largely sparse datasets. In simple words, your data is vastly spread out. For example the values of X maybe like so: X = [1, 4, 400, 10000, 100000]

What does preprocessing scale do in Python?

scale. Standardize a dataset along any axis. Center to the mean and component wise scale to unit variance.

Is scaling part of preprocessing?

preprocessing. scale() method is helpful in standardization of data points. It would divide by the standard deviation and substract the mean for each data point.

What is the difference between MinMaxScaler and StandardScaler?

StandardScaler follows Standard Normal Distribution (SND). Therefore, it makes mean = 0 and scales the data to unit variance. MinMaxScaler scales all the data features in the range [0, 1] or else in the range [-1, 1] if there are negative values in the dataset.

What is the difference between scale and StandardScaler?

StandardScaler removes the mean and scales the data to unit variance. The scaling shrinks the range of the feature values as shown in the left figure below.

What does Sklearn preprocessing do?

The sklearn. preprocessing package provides several common utility functions and transformer classes to change raw feature vectors into a representation that is more suitable for the downstream estimators. In general, learning algorithms benefit from standardization of the data set.

How do you preprocess data in Python?

To import these libraries, let’s type and run the code below.

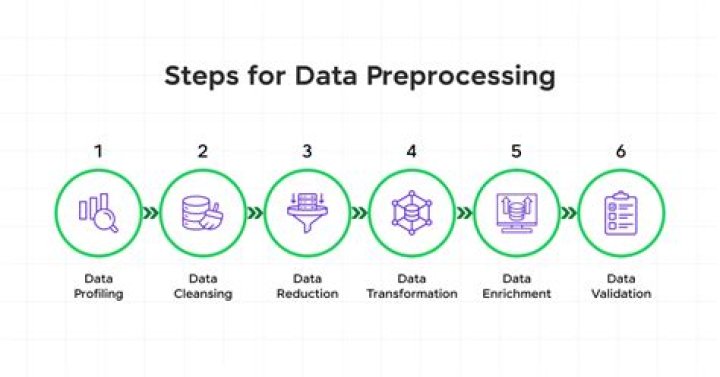

Step 1: Importing the libraries. Step 2: Import the dataset. Step 3: Taking care of the missing data. Step 4: Encoding categorical data. Step 5: Splitting the dataset into the training and test sets. Step 6: Feature scaling.

What does MIN MAX scaler do?

Transform features by scaling each feature to a given range. This estimator scales and translates each feature individually such that it is in the given range on the training set, e.g. between zero and one.

What does preprocessing normalize do?

Normalizer. Normalize samples individually to unit norm. Each sample (i.e. each row of the data matrix) with at least one non zero component is rescaled independently of other samples so that its norm (l1, l2 or inf) equals one.

How do you use StandardScaler?

Explanation:

Import the necessary libraries required. Load the dataset. Set an object to the StandardScaler() function.Segregate the independent and the target variables as shown above.Apply the function onto the dataset using the fit_transform() function.

Why do we use standard scaler?

StandardScaler removes the mean and scales each feature/variable to unit variance. This operation is performed feature-wise in an independent way. StandardScaler can be influenced by outliers (if they exist in the dataset) since it involves the estimation of the empirical mean and standard deviation of each feature.

What is standard scaler?

StandardScaler is the industry’s go-to algorithm. StandardScaler standardizes a feature by subtracting the mean and then scaling to unit variance. Unit variance means dividing all the values by the standard deviation.

Is MinMaxScaler a normalization?

Normalization refers to the rescaling of the features to a range of [0, 1], which is a special case of min-max scaling. To normalize the data, the min-max scaling can be applied to one or more feature columns.

What is StandardScaler in machine learning?

In Machine Learning, StandardScaler is used to resize the distribution of values so that the mean of the observed values is 0 and the standard deviation is 1.

Does scaling affect outliers?

By scaling data according to the quantile range rather than the standard deviation, it reduces the range of your features while keeping the outliers in.

What is difference between StandardScaler and Normalizer?

The main difference is that Standard Scalar is applied on Columns, while Normalizer is applied on rows, So make sure you reshape your data before normalizing it.

Why do we use robust scaler?

Scale features using statistics that are robust to outliers. This Scaler removes the median and scales the data according to the quantile range (defaults to IQR: Interquartile Range). In such cases, the median and the interquartile range often give better results.

What is L2 Normalisation?

The L2 norm calculates the distance of the vector coordinate from the origin of the vector space. As such, it is also known as the Euclidean norm as it is calculated as the Euclidean distance from the origin. The result is a positive distance value.